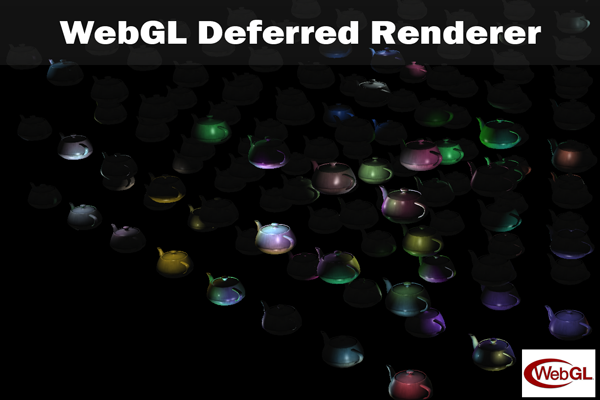

WebGL Deferred Renderer With Custom JavaScript 3D Engine

Project Features

Custom JavaScript 3D Engine

WebGL 1.0 / GLSL ES 1.0 with 2.0 Supported Extensions

Over 100 Randomly Moving Dynamic Point Lights

FrameBuffer Support with Multiple Render Targets

Three Pass Render Pipeline

Geometry Buffer with Three Render Targets

Light Buffer with Two Render Targets

Supports Diffuse and Specular Lighting

Debug Views For Render Targets

Project Overview

The goal of this project was to explore a Deferred Rendering Pipeline using WebGL 1.0 extensions that preview WebGL 2.0 features, many of which are still in development or experimental stages*. I entered unexplored territory in Chrome and Firefox by enabling experimental WebGL extensions and Direct3D 11 so I could use WEBGL_draw_buffers. This exploratory project serves as a proof of concept for implementing Deferred Shading in WebGL ahead of its transition to version 2.0.

* Note: The extension lists can be found in Chrome by typing about:flags into the browser or about:config on Firefox.
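
As a rough illustration of the extension handshake, the sketch below requests a list of extensions from a WebGL context and fails loudly when one is missing. `getExtension` is the standard WebGL call; the helper name `requireExtensions` and the extension list in the usage line are assumptions for illustration, not code taken from the engine.

```javascript
// Hypothetical helper: request each named extension and collect the results.
// gl.getExtension returns null when the browser does not expose the extension.
function requireExtensions(gl, names) {
  const found = {};
  const missing = [];
  for (const name of names) {
    const ext = gl.getExtension(name);
    if (ext) {
      found[name] = ext;
    } else {
      missing.push(name);
    }
  }
  if (missing.length > 0) {
    throw new Error('Missing WebGL extensions: ' + missing.join(', '));
  }
  return found;
}

// Usage (in a browser, with an assumed extension list):
// const exts = requireExtensions(gl, ['WEBGL_draw_buffers', 'OES_texture_float']);
```

Failing at startup with the names of the missing extensions makes it easier to tell a browser flag problem from a rendering bug.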

Rendering Pipeline

Geometry Pass

The Geometry pass is the first pass in my rendering pipeline. For this pass, the world scene is rendered as normal with the Geometry Buffer's (commonly referred to as the G-Buffer) FrameBuffer bound as the current render target. The G-Buffer consists of three attachments that store RGBA Float values whose contents are denoted in the picture above.* It is important to set the blendFunc to ( ONE, ZERO ) because non-alpha data is stored in the alpha channel. No lighting calculations are performed at this stage. To capture data to multiple render targets in a GLSL ES 1.0 fragment shader, three steps must be taken.

- One must call drawBuffers, declaring the channels one wishes to output to, using a reference to the requested draw_buffers extension while the G-Buffer's FBO is the active FrameBuffer. See GBuffer.js in the code sample at the bottom.

- In the geometry fragment shader, high precision floating point must be used and a pre-processor directive declared to require draw_buffers: #extension GL_EXT_draw_buffers : require

- Lastly, one can output to multiple channels by indexing gl_FragData as an array. For the G-Buffer, this will be gl_FragData[0], gl_FragData[1], and gl_FragData[2].
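
The three steps above can be sketched roughly as follows. `drawBuffersWEBGL` and the `COLOR_ATTACHMENT0_WEBGL` constant come from the WEBGL_draw_buffers extension object; the function name `configureGBufferTargets`, the varying and uniform names, and the choice of what each target stores are placeholders for illustration rather than the contents of GBuffer.js.

```javascript
// Assumed fragment shader source. The channel layout here is illustrative only.
const GEOMETRY_FRAGMENT_SHADER = `
#extension GL_EXT_draw_buffers : require
precision highp float;

varying vec3 vNormal;
varying vec3 vWorldPosition;
uniform vec4 uDiffuseColor;

void main() {
  gl_FragData[0] = uDiffuseColor;
  gl_FragData[1] = vec4(normalize(vNormal), 0.0);
  gl_FragData[2] = vec4(vWorldPosition, 1.0);
}
`;

// Hypothetical helper: attach one texture per color attachment, then declare
// the attachments as draw buffers while the G-Buffer FBO is bound.
function configureGBufferTargets(gl, ext, fbo, textures) {
  gl.bindFramebuffer(gl.FRAMEBUFFER, fbo);
  const attachments = [];
  textures.forEach((texture, i) => {
    // The COLOR_ATTACHMENTi_WEBGL constants are consecutive integers.
    const attachment = ext.COLOR_ATTACHMENT0_WEBGL + i;
    gl.framebufferTexture2D(gl.FRAMEBUFFER, attachment, gl.TEXTURE_2D, texture, 0);
    attachments.push(attachment);
  });
  ext.drawBuffersWEBGL(attachments);
  // Overwrite rather than blend, since alpha carries non-alpha data.
  gl.blendFunc(gl.ONE, gl.ZERO);
  return attachments;
}
```

Creating the float textures and checking framebuffer completeness are left out to keep the sketch short.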

See below for an example of the debug views for the G-Buffer render targets.

* It is worth noting that Render Target Three can opt to store depth in a single channel instead of world position in the rgb channels. This leaves room for specular color or other metadata such as the emissive multiplier. Position values can be extracted using the inverse perspective matrix and inverse view matrix with the xy values of the texture coordinates and the texel's depth.
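
A minimal sketch of that reconstruction, written in JavaScript so the arithmetic is easy to follow (in practice it would live in the light shader). It assumes texture coordinates and depth are both in the range [0, 1], that depth is the window-space value written by the default depth range, and that matrices are column-major 16-element arrays. The function names are hypothetical.

```javascript
// Multiply a column-major 4x4 matrix (16-element array) by a 4-component vector.
function transformVec4(m, v) {
  const out = [0, 0, 0, 0];
  for (let row = 0; row < 4; row++) {
    for (let col = 0; col < 4; col++) {
      out[row] += m[col * 4 + row] * v[col];
    }
  }
  return out;
}

// Assumption: uv and depth are in [0, 1]; both are remapped to [-1, 1].
function reconstructWorldPosition(uv, depth, invProjection, invView) {
  const ndc = [uv[0] * 2 - 1, uv[1] * 2 - 1, depth * 2 - 1, 1];
  // Undo the projection, then the perspective divide, to reach view space.
  const clip = transformVec4(invProjection, ndc);
  const viewPos = [clip[0] / clip[3], clip[1] / clip[3], clip[2] / clip[3], 1];
  // Undo the view transform to reach world space.
  const world = transformVec4(invView, viewPos);
  return [world[0], world[1], world[2]];
}
```

The trade-off is a few extra matrix operations per texel in exchange for freeing three channels of the third render target.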

Light Pass

The Lighting pass is performed after the Geometry pass and contains two render targets, one for diffuse lighting accumulation and one for specular lighting accumulation. The L-Buffer's FrameBuffer is bound as the current render target. In this pass, scene geometry is not rendered; instead, geometry representing dynamic lights is rendered. For this demo, I chose to showcase point lights, which are represented by a sphere and have their own shader (see PointLightFragmentShader.glsl in the code samples at the bottom). The motivation for using geometry to represent lights is simple: I want to avoid shading pixels on which the light has no effect. By rendering a sphere with front faces culled, I only perform lighting calculations on texels within the point light's outer radius. This works because the blendFunc is set to ( ONE, ONE ), which accumulates data into the render target buffers instead of writing over it. Each light is iterated over and rendered to the L-Buffer FBO. After all the light data has been accumulated, the debug views for the L-Buffer look as follows.

Other types of lights can be represented in this manner as well. For example, a local spotlight would take the form of a frustum and a local ambient light a sphere. Unfortunately, global lights such as a directional light gain no benefit from geometric representation. For this type of light, one must perform lighting calculations on each texel in the render targets. For large render target buffers, this can be an expensive operation. In fact, it might even be better to perform calculations for global directional lights in the geometry pass.
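
The point-light accumulation described in this section can be sketched as the state setup and loop below. The WebGL calls are standard; `renderLightPass` and the `drawLightSphere` callback are hypothetical names, and clearing the L-Buffer and configuring the depth test are left out because they depend on engine details not covered here.

```javascript
// Hypothetical light pass: bind the L-Buffer, switch to additive blending,
// cull front faces, and draw one sphere per point light.
function renderLightPass(gl, lightBufferFbo, lights, drawLightSphere) {
  gl.bindFramebuffer(gl.FRAMEBUFFER, lightBufferFbo);

  // Additive blending so each light adds to what is already in the targets.
  gl.enable(gl.BLEND);
  gl.blendFunc(gl.ONE, gl.ONE);

  // Draw only the back faces of each light volume.
  gl.enable(gl.CULL_FACE);
  gl.cullFace(gl.FRONT);

  for (const light of lights) {
    drawLightSphere(gl, light);
  }

  // Restore the usual culling mode for later passes.
  gl.cullFace(gl.BACK);
}
```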

Final Pass

The final pass consists of using the data from Render Targets One, Four, and Five to determine the final texels to output to the screen. A simple quad the size of the screen is rendered using an orthographic projection. In this pass, no custom FrameBuffers are bound and the blendFunc is set to (SRC_ALPHA, ONE_MINUS_SRC_ALPHA). See below for an example of a scene lit with 150 dynamic point lights.
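
How the three targets are combined is not spelled out above. One common choice, assumed in this CPU-side sketch, is to treat Render Target One as surface color, multiply it by the accumulated diffuse light, and add the accumulated specular light. The function name `compositeTexel` and this formula are illustrative assumptions; in the renderer the equivalent work would happen per texel in the final fragment shader.

```javascript
// Clamp a value to the displayable [0, 1] range.
function clamp01(x) {
  return Math.min(1, Math.max(0, x));
}

// Assumed combination: color * diffuseLight + specularLight, per channel.
// albedo is [r, g, b, a]; diffuseLight and specularLight are [r, g, b].
function compositeTexel(albedo, diffuseLight, specularLight) {
  const out = [0, 0, 0, albedo[3]];
  for (let i = 0; i < 3; i++) {
    out[i] = clamp01(albedo[i] * diffuseLight[i] + specularLight[i]);
  }
  return out;
}
```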

Project Post Mortem

What Went Well

- As this was one of my later projects at The Guildhall, I knew to focus on debugging tools as early as possible. Within the initial weeks of the project, I focused most of my efforts on integrating WebGL Inspector and debug views for render target data. Even though this slowed down initial progress on the rendering pipeline, it paid huge dividends throughout the project by deepening my understanding of WebGL and squashing bugs in an efficient, timely manner.

- A great side benefit of the project was the concomitant creation of a JavaScript 3D Engine. I now have a sandbox for experimenting with WebGL and Web Development in general.

- I was able to prove a Deferred Rendering Pipeline can be implemented in WebGL!

What Went Wrong

- Because I was using many experimental WebGL extensions, documentation was often sparse or not to be found. I spent many hours researching and trying to find the appropriate extensions which would allow me to leverage multiple render targets. This led to a lot of initial frustration and halted progress, as it felt like a trial and error process with endless knob tweaking to get WEBGL_draw_buffers as an available extension. After browsing the dark catacombs of Google's WebGL bug reports, I finally found the correct setup I would need to run the demo on Chrome. This is a great example of where I likely would have sped up the process and avoided frustration by asking the developers themselves.

- I did not anticipate the experimental extensions breaking WebGL debugging tools. Early in development, I integrated WebGL Inspector to assist in debugging my rendering pipeline. I became very dependent on this tool, as it conveniently displayed buffer contents, sequential WebGL calls, and texture data. Enabling the depth buffer and draw buffers extensions broke the tool to the point where it could no longer display any debug data. This meant that midway through the project I had to find more creative ways to debug my rendering pipeline. This is an often unspoken downside of using third party libraries over which there is little control.

- I simply ran out of time to integrate my 3DS Max Exporter/Importer with my JavaScript Engine. This was discouraging as it limited the final feature-set and visual demonstration. I had a short time frame to work on this tech demo, and ultimately the goal was a deferred rendering pipeline. So, the exporter/importer had to take a backseat.

What I Learned

- JavaScript is an evolving and very flexible language. With the development of Google's V8 JavaScript Engine, inclusion of typed arrays, and growing third party developer community, JavaScript and WebGL are becoming a viable option for cross-platform game development. I do not see AAA games using WebGL anytime soon; however, I do believe we will see many companies use it as an avenue for tools development. It has a long way to go, but it is amazing to see how far web development has come in the last ten years.

- The garbage collector is often your worst enemy. When doing performance profiling towards the end of the project, I would often notice unpredictable dips in an otherwise steady framerate. These mild dips occurred with the same magnitude regardless of whether there were five or one hundred dynamic lights in the scene. As I continue to develop this engine, a good amount of my focus will be on reducing garbage collection frequency. Some approaches I would use are object pooling and avoiding object creation when possible. This is a prime example of why game developers opt for C++, where memory management is under the developer's control.
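
As a minimal sketch of the object pooling idea, the class below hands out reusable vector objects instead of allocating a new one for every calculation. The class name `Vec3Pool` and its methods are hypothetical and are not part of the engine described here.

```javascript
// Hypothetical pool of reusable { x, y, z } objects.
class Vec3Pool {
  constructor() {
    this.free = [];   // objects available for reuse
    this.created = 0; // number of real allocations, useful when profiling
  }

  // Return a pooled object if one is free, otherwise allocate a new one.
  acquire(x = 0, y = 0, z = 0) {
    const v = this.free.length > 0 ? this.free.pop() : this.allocate();
    v.x = x;
    v.y = y;
    v.z = z;
    return v;
  }

  allocate() {
    this.created++;
    return { x: 0, y: 0, z: 0 };
  }

  // Hand the object back; the caller must not use it afterwards.
  release(v) {
    this.free.push(v);
  }
}

// Usage: temporaries inside a per-frame loop come from the pool.
// const tmp = pool.acquire(1, 2, 3); /* ...use tmp... */ pool.release(tmp);
```

Once the pool has warmed up, a steady-state frame allocates nothing for these temporaries, which gives the garbage collector less to do.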